YouTube just opened auto-dubbing in 27 languages to every creator on the platform — and 6 million people are already watching 10+ minutes of auto-dubbed content daily. The question isn’t “should I dub?” anymore. It’s “how good is AI dubbing, really?”

If you’re a creator weighing AI dubbing against hiring voice actors, you’ve probably heard everything from “it’s 95% accurate” to “it kills your personality.” Neither is the full picture. By the end of this post, you’ll understand the 5 real quality dimensions where AI and human dubbing differ, know exactly when AI is good enough (and when it’s not), and have a practical hybrid workflow that hits the sweet spot.

One thing upfront: quality depends on your content type, language pair, and willingness to edit — not on a single “AI is better/worse” label.

1. AI dubbing costs 50–75% less and delivers in minutes, but drops quality on emotional and culturally nuanced content.

2. Human dubbing wins on performance depth and expressiveness but doesn’t scale for multi-language creator workflows.

3. A hybrid approach — AI first pass + sentence-level editing — consistently hits a strong quality-to-cost ratio for creators.

Most AI dubbing comparisons say “AI is improving” without defining what “quality” actually means. That’s like saying “the camera is better” without saying whether it is better for photos, video, or low light.

The VOX-DUB benchmark — the first open, human-evaluated AI dubbing quality benchmark — breaks this down. Using 30,240 blind judgment instances across 4 commercial AI dubbing systems (ElevenLabs, Minimax, Deepdub, and Dubformer), it scores quality across 5 specific dimensions:

1. Pronunciation accuracy — Are words and names said correctly?

2. Naturalness — Does the speech flow like a real conversation, or does it sound stilted?

3. Audio quality — Is the output clean, free of artifacts and distortion?

4. Emotional accuracy — Does the dubbed voice carry the same feeling as the original?

5. Voice similarity — Does it sound like you (or at least close)?

Here’s why this matters: a single “quality” score is misleading. AI can score well on 3 out of 5 dimensions and still sound off — because emotion and naturalness carry outsized weight in how viewers experience your content.

Think of it this way: an AI dub of a tutorial might score 4/5 on pronunciation and audio quality, but a comedy sketch might score 2/5 on emotion and naturalness. Same tool, very different results.
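To make the point concrete, here is a minimal sketch comparing a plain average with a weighted one. The scores and weights are illustrative assumptions, not VOX-DUB figures; the only thing taken from the text is the idea that emotion and naturalness count for more than the other dimensions.

```python
# Hypothetical 1-5 scores for one AI dub; not taken from the VOX-DUB results.
scores = {
    "pronunciation": 4,
    "naturalness": 2,
    "audio_quality": 4,
    "emotion": 2,
    "voice_similarity": 4,
}

# Assumed weights: emotion and naturalness count double. Tune for your content.
weights = {
    "pronunciation": 1,
    "naturalness": 2,
    "audio_quality": 1,
    "emotion": 2,
    "voice_similarity": 1,
}


def plain_average(scores):
    """Unweighted mean across all dimensions."""
    return sum(scores.values()) / len(scores)


def weighted_average(scores, weights):
    """Mean where each dimension counts in proportion to its weight."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


print(f"plain: {plain_average(scores):.2f}")
print(f"weighted: {weighted_average(scores, weights):.2f}")
```

With these made-up numbers the plain average is 3.20, while the weighted one drops to about 2.86. Three strong dimensions hide two weak ones in the plain average, which is why a single score can look fine while the dub still sounds off.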

Understanding these 5 dimensions helps you predict where AI will work for your content and where it won’t.

The VOX-DUB benchmark tested 4 commercial systems across 2 languages. Here’s how each dimension stacks up against professional voice actors.

A few things stand out here:

Emotion is AI’s biggest gap. The VOX-DUB data shows that when AI systems try to boost emotional expression, they introduce audio artifacts — a quality/emotion trade-off that hasn’t been solved yet. This is why personality-driven content still sounds off in AI dubbing, even when every word is technically correct.

Note: In the VOX-DUB benchmark results, ElevenLabs stands out as a leading performer among the tested systems. GoodDub uses ElevenLabs for its TTS infrastructure.

AI shines with structured content. Pronunciation accuracy and audio consistency — AI’s strong suits — matter most for tutorials, how-to videos, and informational content. Meanwhile, human strengths like emotion and naturalness matter most for personality-driven and entertainment content.

Voice similarity is the fastest-moving dimension. YouTube’s Expressive Speech feature (launched February 2026, powered by Gemini) replicates a creator’s pitch, intonation, and energy across 8 languages. This is changing what “AI dubbing” sounds like — fast.

Quality is only half the decision. What about the numbers?

The cost ranges:

· AI dubbing: $10–30 per minute of content (Verbolabs 2026 data; Checksub pricing guide)

· Human dubbing: $20–75+ per minute, depending on studio tier and language — basic professional runs $20–40/min, broadcast-quality $50–75/min (Verbolabs; Checksub)

· That’s roughly a 50–75% cost reduction on paper.

The turnaround gap: AI delivers a first draft in minutes. Human dubbing takes days to weeks — casting, scheduling, recording, mixing.

But here’s what most comparisons skip: raw AI output isn’t a finished product. You still need to edit for emotional accuracy, timing, and cultural nuance. So the honest comparison is “AI generation cost + your editing time” vs “human-only cost.”

Take a 10-minute video dubbed into 3 languages:

· AI route: ~$300–900 for generation (30 dubbed minutes at $10–30 per minute) + ~2 hours of your own sentence-level editing time

· Human route: ~$600–2,250 in total across the 3 languages for voice actors + studio time + mixing (30 dubbed minutes at $20–75 per minute)

Even with editing time factored in, the cost gap is substantial for multi-language creator workflows.
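The comparison can be sketched as a small calculator. It assumes flat per-minute pricing with no volume discounts or subscription plans, and the `hourly_rate` used to value your own editing time is a placeholder. Per-minute rates vary widely between tools and studios, so substitute the rates you are actually quoted.

```python
def dubbing_cost(minutes, languages, rate_per_min, editing_hours=0.0, hourly_rate=0.0):
    """Return (low, high) total cost in dollars.

    rate_per_min is a (low, high) tuple in dollars per finished minute.
    editing_hours and hourly_rate value your own review time (assumed inputs).
    """
    dubbed_minutes = minutes * languages
    editing = editing_hours * hourly_rate
    low, high = rate_per_min
    return dubbed_minutes * low + editing, dubbed_minutes * high + editing


# 10-minute video, 3 languages, using the per-minute ranges from the cost list.
# The $50/hour value for your editing time is hypothetical.
ai_low, ai_high = dubbing_cost(10, 3, (10, 30), editing_hours=2, hourly_rate=50)
human_low, human_high = dubbing_cost(10, 3, (20, 75))

print(f"AI + editing: ${ai_low:,.0f}-${ai_high:,.0f}")
print(f"Human only:   ${human_low:,.0f}-${human_high:,.0f}")
```

Keeping editing time as an explicit input makes it easy to see how quickly the gap narrows if a video needs heavy manual review.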

The YouTube auto-dubbing pilot tells the story:

· Jamie Oliver’s channel tripled views after implementing multi-language audio.

· Mark Rober averaged 30 languages per video during the pilot.

· Culinary and entertainment channels saw up to 3x growth in international viewership.

· Pilot creators saw 25%+ watch time from non-primary language viewers.

These results came from AI dubbing — not flawless, but good enough to unlock audiences that weren’t watching at all before.

AI dubbing quality has improved dramatically, but results vary by language, accent, and content style — emotional or jargon-heavy videos will still need sentence-level review to sound right.


Here’s a practical framework. Find your content type and see where AI dubbing lands:

Your audience’s expectations matter too. A tech tutorial viewer will tolerate minor AI artifacts far more than a comedy audience expecting precise timing. Ask yourself: did your viewers come for information or for performance? That answer should guide your choice.

The budget axis: If your real choice is “AI dub in 5 languages” or “human dub in 1 language,” most creators gain more from reach. This is especially true for informational content where AI already performs well.

YouTube’s Expressive Speech feature (February 2026, Gemini-based) specifically targets the emotional delivery gap. If you’re using YouTube’s auto-dubbing, this may bump “Vlogs / personality-driven” from Medium to Medium-High for the 8 supported languages: English, French, German, Hindi, Indonesian, Italian, Portuguese, and Spanish. Worth testing if your content falls in that middle zone.

If you’ve read this far, you already know neither option is universally better. So what do creators actually need to do in practice?

Just go hybrid: use AI for the bulk of sentences it handles well, then focus human effort on the ones that need it — emotional beats, cultural references, timing-sensitive moments.

When you review your AI-dubbed output, focus on these four areas — ranked by how much they affect what your viewers actually experience:

1. Emotional accuracy — Does the dubbed line carry the same feeling? The VOX-DUB benchmark found this is where AI systems score lowest. A flat delivery on an excited moment breaks the experience instantly. Listen specifically to the first and last sentence of each section — they carry the most emotional weight.

2. Timing and lip-sync — Does the sentence fit the speaker’s mouth movement? Mismatched timing is the most visually obvious artifact and the first thing viewers notice in personality-driven content.

3. Pronunciation — Proper nouns, technical terms, brand names. AI often stumbles on these, especially in non-English target languages where transliteration rules vary.

4. Cultural references — Idioms, humor, local context. A literal translation of a joke rarely lands. If you used a culture-specific reference in the original, flag that sentence for manual review before you even listen to the AI output.
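The review order above can be sketched as a simple triage pass over the dubbed sentences. The `Sentence` fields and flag names here are hypothetical and not part of any tool's API; how each flag gets set (by ear, by a script, or from a checklist) is up to you.

```python
from dataclasses import dataclass, field

# Review areas in the priority order listed above (most impactful first).
PRIORITY = ["emotion", "timing", "pronunciation", "cultural_reference"]


@dataclass
class Sentence:
    index: int
    text: str
    flags: set = field(default_factory=set)  # subset of PRIORITY, set during review


def review_queue(sentences):
    """Return flagged sentences, most impactful issue first, then by position."""
    def top_issue_rank(sentence):
        return min(PRIORITY.index(flag) for flag in sentence.flags)

    flagged = [s for s in sentences if s.flags]
    return sorted(flagged, key=lambda s: (top_issue_rank(s), s.index))


sentences = [
    Sentence(0, "Welcome back to the channel!", {"emotion"}),
    Sentence(1, "Today we are installing the new firmware."),
    Sentence(2, "It is a piece of cake.", {"cultural_reference"}),
    Sentence(3, "Open the Settings panel.", {"pronunciation", "timing"}),
]

for s in review_queue(sentences):
    print(s.index, sorted(s.flags), s.text)
```

Unflagged sentences never enter the queue, so the human effort goes only to the lines that need it, starting with the ones viewers are most likely to notice.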

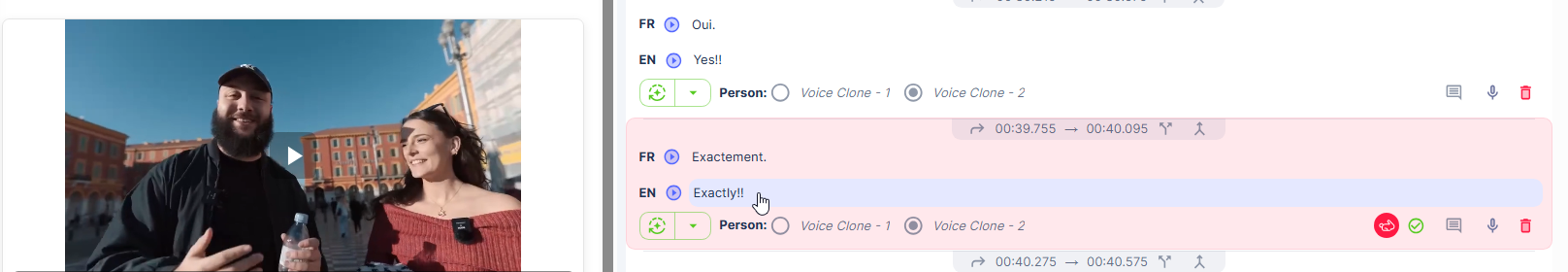

In GoodDub, after AI generates your dubbed video, you can click into any sentence and re-record, adjust timing, or swap the take. This means you only spend human effort on the sentences that need it — not the entire video.

GoodDub turns the AI draft into sentence-level editable tracks, so you control quality through process, not luck.

The hybrid approach gets you closer to human-level quality at a fraction of the cost and turnaround — and you keep full control over the result.

GoodDub sentence-level editor page: GoodDub Studio

Neither AI nor human dubbing is universally “better” — the right choice depends on your content type, your audience’s expectations, and how much editing time you’re willing to invest. For most creators, the practical answer isn’t “AI or human” — it’s “AI + targeted editing.”

AI dubbing will keep improving. But today, the creators getting strong results are the ones who treat the AI output as a first draft, not a final product.

Try GoodDub free — upload a video, see the AI draft, and edit any sentence yourself before you decide.